Why Testing Web 2.0 Sites Requires Real Browser Measurements

We commonly hear the term “Web 2.0,” but if you ask someone, “What is Web 2.0?” you will get many different answers. Some people call it a technology, but it is really the second generation of Web development and design.

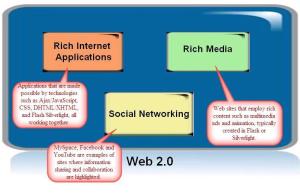

This second generation includes tools that enable better collaboration, communication, and interoperability on the World Wide Web. Social networking sites such as MySpace, Facebook, and YouTube are good examples of the information sharing and collaboration that we call Web 2.0. Among Web 2.0 applications, there is a class of Rich Internet Applications that are made possible by technologies such as Ajax/JavaScript, CSS, DHTML/XHTML, and Flash/Silverlight, all working together.

Nowadays, the most engaging Web applications also rely on the browser to execute code and redisplay content. This often requires a client-side engine, such as the JavaScript engine built into the browser or a Flash player installed as a plug-in. The Web operating system no longer resides only on the server; it also runs on the user’s desktop, in the client-based browser.

Monitoring The Performance Of Web Applications And Properties

Website Performance Monitoring is a discipline for IT operations and Ecommerce business management teams that includes both operational monitoring and end-user experience monitoring.

Operational Monitoring helps operations teams implement dependable end-to-end performance monitoring for critical Web applications as a turn-key service, and is achieved with high-frequency application monitoring that detects response time and availability issues in real time.
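As a rough illustration of what such monitoring boils down to, the sketch below summarizes a batch of synthetic checks into an availability percentage and a count of slow responses. The `CheckResult` type, the `summarize_checks` function, the sample values, and the 2-second threshold are all assumptions made for this example; they do not describe any particular monitoring product.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CheckResult:
    """One synthetic check: response time in seconds, or None if the check failed."""
    response_time_s: Optional[float]


def summarize_checks(results: List[CheckResult], slow_threshold_s: float = 2.0) -> dict:
    """Compute availability, mean response time, and slow-check count for a batch."""
    total = len(results)
    if total == 0:
        raise ValueError("no check results to summarize")
    # A check counts as successful when it returned a response time at all.
    successes = [r.response_time_s for r in results if r.response_time_s is not None]
    # A successful check is "slow" when it exceeds the (assumed) threshold.
    slow = [t for t in successes if t > slow_threshold_s]
    return {
        "availability_pct": 100.0 * len(successes) / total,
        "mean_response_s": sum(successes) / len(successes) if successes else None,
        "slow_checks": len(slow),
    }


# Hypothetical sample: three successful checks and one failure.
sample = [CheckResult(0.5), CheckResult(1.5), CheckResult(None), CheckResult(4.0)]
summary = summarize_checks(sample)
print(summary)
```

In practice the check results would come from probes run at a high frequency, and the summary would be compared against alerting thresholds rather than printed.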

End-user Experience Monitoring is the domain of both operations teams and performance analysts, who need to most accurately measure the web performance of critical transactions, including those involving interactive Flash, from the true end user’s perspective. This gives full visibility into the user’s experience by providing real browser measurements of the responsiveness and availability of RIAs.

Meeting Or Exceeding Consumer Expectations In The Web 2.0 World

Because the Internet is a medium capable of delivering rich and highly interactive experiences, it poses new challenges. Users expect excellent technical quality and consistent performance, free of errors and technical issues. Sites with technical issues and errors are seeing their market share erode, because users can easily move to competitors; the result is lower satisfaction, poorer brand perception, and higher costs. Website monitoring services make the task easier and more focused. The combination of new technology and higher user expectations creates significant challenges to managing the online experience.

Site owners can no longer rely on simplistic data center measurements of uptime or server performance to understand their end users’ experience. Nor will measurements of single pages provide a view into the end-to-end performance of the user’s online experience. Web 2.0 promises substantial gains over older communication tools in the areas of interaction and relationship building, and in creating applications that are composed of other applications. The challenge in the Web 2.0 world is to keep meeting or exceeding consumer expectations with web performance and to deliver growth in a complex technology environment.

Meeting User Expectations With Web Performance

Achieving consistently fast website performance is quite a challenge. Today’s web users have high expectations, while organizations want to provide more functionality and also cut infrastructure costs. Some best practices can be used to measure performance and revise strategies: measure users’ key objectives, measure site usage and the geographies where performance varies, and identify the infrastructure elements that affect your site’s performance.

To meet users’ requirements, we need to architect web pages for speed. A few things to point out: minimize server requests, compress data, and use page analysis tools, web page monitoring tools, and software that can improve page speed. Beyond these, smart caching will eliminate bottlenecks, and it needs to be done from your customer’s perspective.
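To make the "compress data" point concrete, here is a minimal sketch that gzips a made-up HTML payload with Python's standard `gzip` module and reports the size reduction. The payload is hypothetical and deliberately repetitive, so real pages will compress by different amounts.

```python
import gzip

# Hypothetical HTML payload; repetitive markup like this compresses well.
html = ("<ul>" + "<li class='item'>Example product row</li>" * 200 + "</ul>").encode("utf-8")

# Compress the payload the way a server might before sending it to a browser.
compressed = gzip.compress(html)
ratio = len(compressed) / len(html)

print(f"original: {len(html)} bytes, gzipped: {len(compressed)} bytes, ratio: {ratio:.2%}")

# Decompressing restores the original bytes, so nothing is lost in transit.
restored = gzip.decompress(compressed)
```

Most web servers and CDNs can apply this kind of compression automatically, so the sketch is mainly useful for estimating how much a given page would benefit.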

Business Value of Web Performance Monitoring

Since most people in business have at least a passing affinity for money, I think this is a relevant topic for examination. While the business impact of web performance is certainly not a new topic, I haven’t yet found any public material that tries to do an analysis for Web Performance Monitoring (WPM). In this post I plan to talk about the impact of WPM on operational metrics, such as Mean Time to Identify (MTTI), which relate to failures or problems on a web property.

The argument goes that these metrics, commonly tracked by IT and operations, are good proxies for business costs and that WPM will improve them. As a consequence, WPM will improve the business bottom line. I’ll also address the more complicated topic of how much value this might be. Let me caveat outright that this is an illustrative example, and a generalized one at that. However, it can be useful to you as a framework for completing this analysis for your own business.
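For readers who want to track a metric such as Mean Time to Identify themselves, the following sketch shows one simple way to compute it: average the gap between when each problem started and when it was identified. The incident timestamps and the `mean_time_to_identify` helper are invented for illustration and are not taken from any real data set.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: (when the problem started, when it was identified).
incidents = [
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 9, 30)),      # 30 minutes
    (datetime(2024, 1, 12, 14, 0), datetime(2024, 1, 12, 14, 10)),  # 10 minutes
    (datetime(2024, 1, 20, 22, 0), datetime(2024, 1, 20, 22, 50)),  # 50 minutes
]


def mean_time_to_identify(records):
    """Average the delay between problem start and identification."""
    if not records:
        raise ValueError("no incidents recorded")
    total = sum((identified - started for started, identified in records), timedelta())
    return total / len(records)


mtti = mean_time_to_identify(incidents)
print(mtti)  # 0:30:00
```

Comparing this figure before and after introducing monitoring is one way to apply the framework to your own business.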

Measuring and Monitoring Web 2.0

Inaccurate data undermines the effectiveness of any program of systematic web performance management and causes performance-tuning skills and resources to be applied in ways that are not optimal. It can also lead to unproductive interdepartmental conflicts and disputes over service-level agreements with internal or external service providers when staff question the accuracy of the data, or discover discrepancies in data from different sources.

On Web 2.0 sites, personalization options allow customers to tailor their experience of a site to their individual preferences, and sites are carefully designed to download and display content efficiently and successfully in major browsers. Because customers’ experience depends on their connectivity, sites may even adjust their content based on the browser’s connection speed. Measurement data must reflect this diversity.

Web performance of streamed audio and video must be considered separately from that of other Web application content, because its delivery infrastructure and the Internet protocols it uses are different.

Web performance with streaming media

Streaming media is growing in importance as a business tool for both internal and customer-facing purposes. New media and entertainment applications have been joined by education, corporate and government information, business training and travel replacement in the list of factors driving streaming media’s growth.

Along with the growth, however, have come significant challenges, as streaming media shows corporations and individuals the limits of their information infrastructure. These limits collide with users’ demands for smooth video, clear audio, and performance levels specified and guaranteed by contract. Media providers seeking to provide consistent quality of service face a daunting array of web performance issues, ranging from a lack of last-mile broadband build-out to service interruptions and carrier quality-of-service problems.

Delivering a high-quality user experience is often a significant enough obstacle to prevent organizations from moving forward with a streaming media strategy. Most of the challenges revolve around acceptable performance: either achieving it, or understanding how delivering it affects the performance of the enterprise network as a whole. First, IT managers must understand the technical distinctions between streaming media and other technologies implemented through their networks. Second, solutions exist today to help address these distinctions, such as multicast and Content Distribution Networks (CDNs). Finally, no technology implementation is complete if you can’t measure what you manage. Given the additional challenges and complexities associated with streaming, an intuitive streaming management tool that measures from the user’s perspective is needed to validate performance.

One major part of the performance management problem with Web technologies such as streaming media is the nature of the Internet itself—it is impossible to say from one moment to the next precisely which links will be traversed in sending data from one computer to another via the Internet. Another significant part of the problem is in defining precisely what “acceptable performance” means from the end-user perspective.

Performance management tools and an accurate measurement methodology exist today that can help overcome these obstacles.

Beyond Performance Monitoring

The major difference between systems management and website performance monitoring is that the former is conducted inside the company’s firewall and the latter is conducted from outside the firewall. However, as described, the methods share a basic feature: both are performed by IT staff with owner-operated monitoring software. In other words, an e-business does all the monitoring by itself.

An owner-centric approach suffers from a fundamental bias, at least with respect to performance monitoring. Although e-businesses need to supplement the internal perspective of systems management, performance monitoring can’t do the job effectively if it is conducted with owner-operated tools. Given the realities of the Internet and the pressures of a competitive marketplace, web performance must be monitored by a neutral third party with global resources and a comprehensive, well-tested, credible methodology. This is precisely the service Keynote so effectively provides to help e-businesses improve key Web application service levels, maximize revenue, and effectively manage costs.

E-businesses use IT to enhance online performance

How can an e-business use IT to enhance online web performance and increase revenue while also controlling costs? One obvious strategy is to focus internally on the systems that deliver content and functionality. To this end, most organizations originally invested in tools to monitor the website and the hardware components of their IT infrastructure. These tools worked fine in a contained mainframe environment where functioning hardware was a reliable indication of customer experience. However, as companies moved to distributed servers running Web applications, it became important to also monitor application software characteristics, e.g., the length of time to run a database query or load a Java servlet. The idea is to use these so-called internal systems management tools to detect problems quickly from within the firewall and respond efficiently, thereby diminishing the external impact on service quality and customer satisfaction.

While efficient systems management is important, many IT professionals have recently recognized that it does not always capture customer issues. There are a variety of reasons for this, but the basic deficiency is that speculation about customer service is inferred indirectly from measurements of internal components and applications.

Source: http://keynote.com/docs/whitepapers/ThinkBeyondSoftwareMonitoring.pdf